Instructional Rounds Observation Tool

Transforming ambiguous instructional expectations into a usable decision-support tool for leaders and teachers.

What you’ll see

The core misconception I helped address: “self-direction = students on their own”

The methods behind the tool (classroom observations, interviews, best-practice synthesis)

The tool design itself: observable “look-fors” and practical teacher moves leaders can use to coach teachers

My implementation work: onboarding other coaches/account managers and leading rounds in schools

The impact story: improved walkthrough feedback quality and a clearer definition of the teacher’s role

Organization: Gradient Learning (supporting Summit Learning implementation)

Timeline: 2018–2019

My title: Instructional Specialist and Research Lead

My role: Key contributor (research + synthesis + tool refinement) and implementation lead (enablement + onboarding)

Scale: Used across ~400 schools; targeted rollout in ~15 strategic districts (~50 schools)

Methods: ~25 classroom observations; ~15 interviews; best-practice scan + synthesis

Deliverable: Instructional rounds observation tool / guide + onboarding for Success Managers / instructional coaches

Outcomes (reported): Clearer expectations for teacher facilitation; improved walkthrough look-fors and feedback; increased student engagement (leader-reported). Longer-term signal: 72% of school leaders strongly agreed they were very satisfied with implementation support from their Success Manager / Instructional Specialist (related indicator, not direct attribution).

The problem

In many schools implementing Summit Learning, “self-direction” was often misunderstood as students working independently with minimal teacher involvement. Teachers and leaders lacked a shared, observable understanding of what strong facilitation looked like, so instructional rounds frequently produced inconsistent feedback and missed opportunities to improve classroom practice.

What we needed to learn

What should teachers be doing during self-direction and project-based learning work time?

What should students be doing?

What evidence should leaders look for during walkthroughs?

How can leaders translate observations into actionable feedback?

What I owned

I contributed to the initial research and tool design, then owned refinement based on classroom observations and user feedback. After the tool was developed, I led onboarding and enablement for other account managers and instructional coaches so they could use it effectively with their school and district partners.

Research approach

We triangulated across three inputs:

Classroom observations of strong practice to capture high-leverage teacher and student behaviors in context.

Interviews with program designers and instructional thought partners to clarify the intended instructional model and teacher role.

Best-practice research to validate and strengthen the behavioral look-fors and supports.

What we built

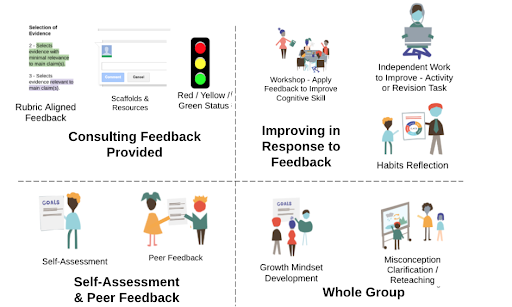

We created an instructional rounds observation tool that made effective facilitation visible and actionable, giving leaders a shared set of look-fors and language for feedback.

A key design feature was turning an ambiguous question (“What should teachers do during self-directed learning?”) into concrete, observable options leaders and teachers could use in the moment, such as:

Brief whole-group instruction at strategic points

Targeted small-group workshops

Purposeful monitoring and scaffolding during independent work

1:1 check-ins with students

The guide also included clear descriptions of what strong learning structures should look like (e.g., workshops, independent work), which made it easier to use during walkthroughs and coaching.

Screenshots shown are from an internal tool used in Summit Learning partner schools during my tenure. The tool has since been retired.

Impact

Leaders reported the tool improved the efficacy of instructional rounds and made feedback to teachers more specific and actionable, helping correct the misconception that teachers are not central to self-directed learning. In implementation, leaders also reported increased student engagement across diverse school contexts.

Because success metrics were not collected specifically for this tool (and I was not the sole project lead), I present impact in two layers:

Direct (qualitative): leader-reported improvements in rounds and feedback quality; clearer shared expectations for teacher facilitation.

Adjacent (quantitative signal): later leader satisfaction with implementation support (72% strongly agree) as a related indicator of the broader support model.

What I learned

This project reinforced how powerful it is to make the invisible visible. When expectations are left implicit, especially in complex instructional models, implementation becomes inconsistent and feedback becomes subjective. Turning an abstract idea like “self-direction” into observable behaviors and practical look-fors created a shared language for teachers and leaders and made walkthroughs more useful. It also reminded me that a tool is only as good as its uptake. The work was not just designing the resource, but refining it based on real use and supporting adoption through onboarding so it could actually change day-to-day practice.